Extract ZIP files in Azure Data Lake Storage using Python

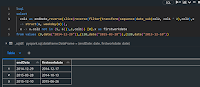

Hi there! This example shows how to extract a ZIP file in Azure Data Lake Storage (ADLS) using Python.

Recently I needed to extract a ZIP file into ADLS storage from a Python script. The approach is the same if you are doing it from a Django or FastAPI service: read the files inside the ZIP into memory one at a time and upload each of them to ADLS. This is unlike Databricks, where the extraction can be done through mount points; doing it from a Python script or service is a bit different, but it is very simple and short.

To run this, I created an ADLS Gen2 storage account and a container named samples.

    """
    Author: PREETish
    Reach me at: https://www.pritishranjan.com
    Queries: https://preetblogs.azurewebsites.net/aboutme
    Github: PreetRanjan
    """
    import zipfile
    import io
    from azure.storage.filedatalake import FileSystemClient
    from datetime import datetime

    connection_string = "<your_connection_string>"
    file_system_client = FileSystemClient.fro...
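
The idea described above (open the ZIP in memory, then upload each entry to ADLS in sequence) might be sketched roughly as follows. This is a minimal illustration, not the original script: the names `extract_zip_to_adls`, `make_client`, and `target_dir` are hypothetical, and the sketch assumes the ZIP content is already available as bytes. It relies only on `get_file_client` and `upload_data` from the `azure-storage-filedatalake` package.

```python
import io
import posixpath
import zipfile


def make_client(connection_string, container="samples"):
    """Hypothetical helper: build a client for one ADLS Gen2 container."""
    # Imported lazily so the rest of the sketch runs without the Azure SDK.
    from azure.storage.filedatalake import FileSystemClient

    return FileSystemClient.from_connection_string(
        connection_string, file_system_name=container
    )


def extract_zip_to_adls(zip_bytes, file_system_client, target_dir="extracted"):
    """Upload every file inside an in-memory ZIP to ADLS, one entry at a time.

    Assumption: zip_bytes holds the whole archive; target_dir is a
    hypothetical destination folder inside the container.
    Returns the list of destination paths that were written.
    """
    uploaded = []
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        for member in archive.infolist():
            # Directory entries carry no data, so skip them.
            if member.is_dir():
                continue
            data = archive.read(member)  # bytes of this single entry
            destination = posixpath.join(target_dir, member.filename)
            file_client = file_system_client.get_file_client(destination)
            file_client.upload_data(data, overwrite=True)
            uploaded.append(destination)
    return uploaded
```

A call could then look like `extract_zip_to_adls(zip_bytes, make_client("<your_connection_string>"))`. Reading one entry at a time keeps only a single extracted file in memory alongside the archive itself, which is usually fine for small and medium archives; very large entries may need a streaming upload instead.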